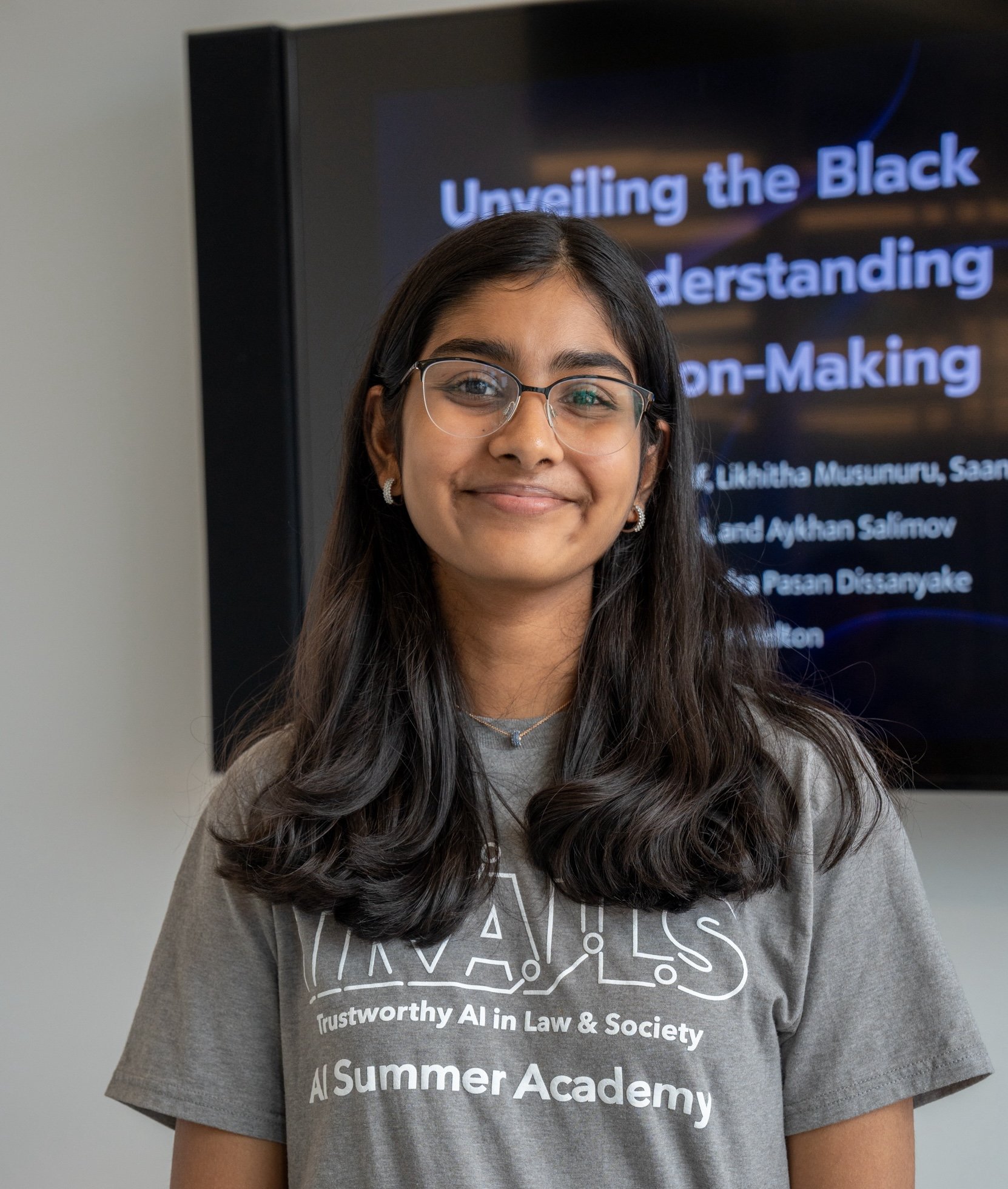

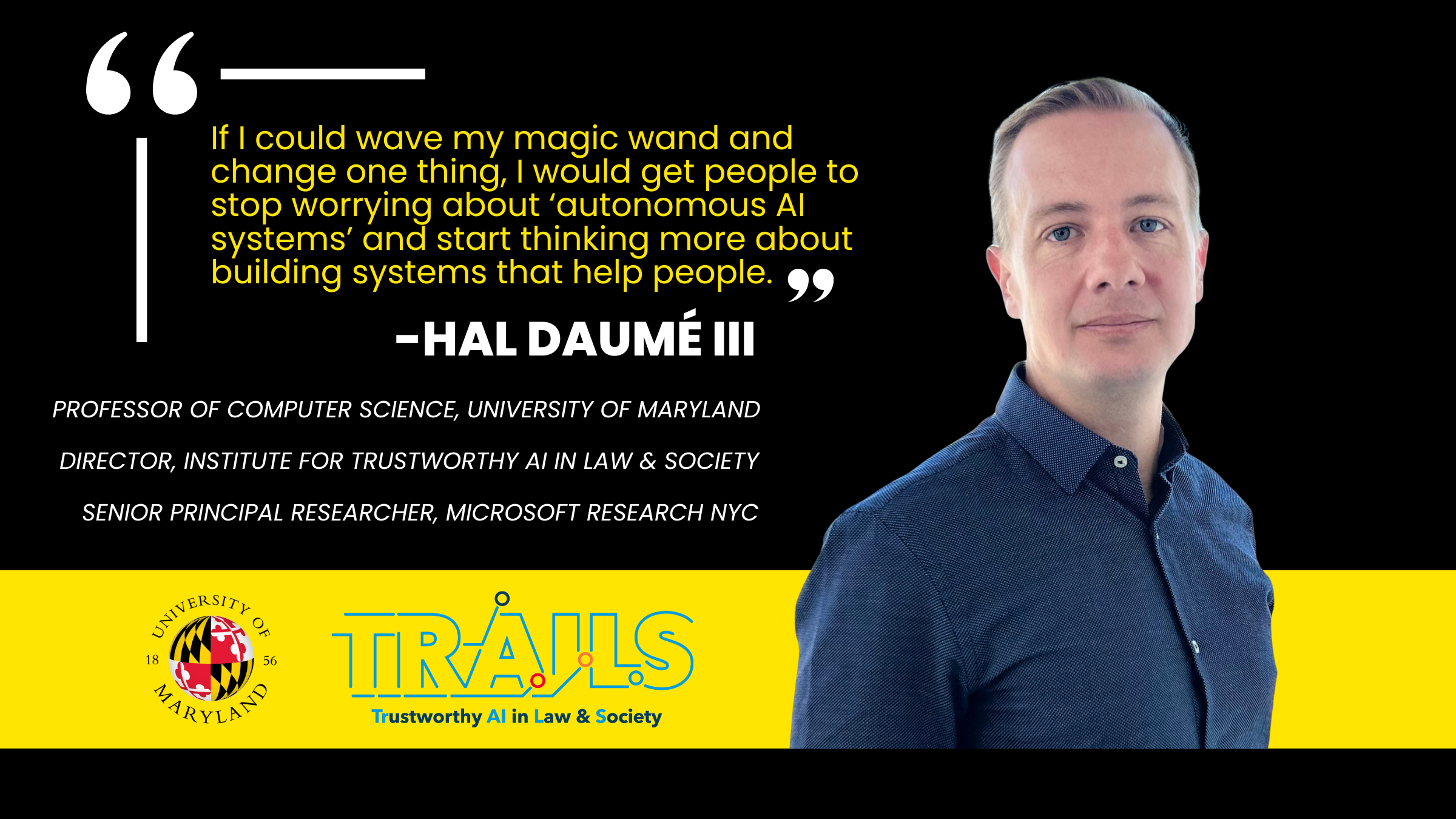

Hal Daumé III, Director

Professor of Computer Science

University of Maryland

Areas of Expertise: Fairness and Natural Language Processing (NLP)

Hal Daumé III is a professor of computer science with appointments in the Maryland Language Science Center and the University of Maryland Institute for Advanced Computer Studies, where he is also the director of TRAILS. In addition to fairness and natural language processing, his research focuses on understanding computational properties of learning and language as well as trustworthy AI.

-

Fleisig, E., Amstutz, A., Atalla, C., Blodgett, S. L., Daumé III, H., Olteanu, A., Sheng, E., Vann, D., & Wallach, H. (2023).

Abstract: It is critical to measure and mitigate fairness-related harms caused by AI text generation systems, including stereotyping and demeaning harms. To that end, we introduce FairPrism, a dataset of 5,000 examples of AI-generated English text with detailed human annotations covering a diverse set of harms relating to gender and sexuality. FairPrism aims to address several limitations of existing datasets for measuring and mitigating fairness-related harms, including improved transparency, clearer specification of dataset coverage, and accounting for annotator disagreement and harms that are context-dependent. FairPrism’s annotations include the extent of stereotyping and demeaning harms, the demographic groups targeted, and appropriateness for different applications. The annotations also include specific harms that occur in interactive contexts and harms that raise normative concerns when the “speaker” is an AI system. Due to its precision and granularity, FairPrism can be used to diagnose (1) the types of fairness-related harms that AI text generation systems cause, and (2) the potential limitations of mitigation methods, both of which we illustrate through case studies. Finally, the process we followed to develop FairPrism offers a recipe for building improved datasets for measuring and mitigating harms caused by AI systems.

-

Sharaf, A., Daumé III, H., & Ni, R. (2022).

Abstract: Machine learning models can have consequential effects when used to automate decisions, and disparities between groups of people in the error rates of those decisions can lead to harms suffered more by some groups than others. Past algorithmic approaches aim to enforce parity across groups given a fixed set of training data; instead, we ask: what if we can gather more data to mitigate disparities? We develop a meta-learning algorithm for parity-constrained active learning that learns a policy to decide which labels to query so as to maximize accuracy subject to parity constraints. To optimize the active learning policy, our proposed algorithm formulates the parity-constrained active learning task as a bi-level optimization problem. The inner level corresponds to training a classifier on a subset of labeled examples. The outer level corresponds to updating the selection policy choosing this subset to achieve a desired fairness and accuracy behavior on the trained classifier. To solve this constrained bi-level optimization problem, we employ the Forward-Backward Splitting optimization method. Empirically, across several parity metrics and classification tasks, our approach outperforms alternatives by a large margin.
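As a rough illustration of the kind of quantity a parity constraint limits, the sketch below computes per-group error rates and the gap between the worst and best group. It is not the paper's algorithm: the function names `group_error_rates` and `error_rate_gap` are hypothetical, and error-rate difference is only one of several possible parity metrics.

```python
from collections import defaultdict


def group_error_rates(y_true, y_pred, groups):
    """Return the misclassification rate for each group label."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        totals[group] += 1
        errors[group] += int(truth != pred)
    return {group: errors[group] / totals[group] for group in totals}


def error_rate_gap(y_true, y_pred, groups):
    """Return the largest difference in error rate between any two groups."""
    rates = group_error_rates(y_true, y_pred, groups)
    return max(rates.values()) - min(rates.values())


if __name__ == "__main__":
    # Toy data (invented for illustration only).
    y_true = [1, 0, 1, 0]
    y_pred = [1, 0, 0, 0]
    groups = ["a", "a", "b", "b"]
    print(group_error_rates(y_true, y_pred, groups))
    print(error_rate_gap(y_true, y_pred, groups))
```

A parity-constrained learner would aim to keep a gap like this below a chosen threshold while maximizing accuracy.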

-

Zhou, K., Blodgett, S. L., Trischler, A., Daumé III, H., Suleman, K., & Olteanu, A. (2022).

Abstract: There are many ways to express similar things in text, which makes evaluating natural language generation (NLG) systems difficult. Compounding this difficulty is the need to assess varying quality criteria depending on the deployment setting. While the landscape of NLG evaluation has been well-mapped, practitioners’ goals, assumptions, and constraints (which inform decisions about what, when, and how to evaluate) are often partially or implicitly stated, or not stated at all. Combining a formative semi-structured interview study of NLG practitioners (N=18) with a survey study of a broader sample of practitioners (N=61), we surface goals, community practices, assumptions, and constraints that shape NLG evaluations, examining their implications and how they embody ethical considerations.

-

Nguyen, K., Bisk, Y., & Daumé III, H. (2022).

Abstract: When deployed, AI agents will encounter problems that are beyond their autonomous problem-solving capabilities. Leveraging human assistance can help agents overcome their inherent limitations and robustly cope with unfamiliar situations. We present a general interactive framework that enables an agent to request and interpret rich, contextually useful information from an assistant that has knowledge about the task and the environment. We demonstrate the practicality of our framework on a simulated human-assisted navigation problem. Aided with an assistance-requesting policy learned by our method, a navigation agent achieves up to a 7× improvement in success rate on tasks that take place in previously unseen environments, compared to fully autonomous behavior. We show that the agent can take advantage of different types of information depending on the context, and analyze the benefits and challenges of learning the assistance-requesting policy when the assistant can recursively decompose tasks into subtasks.

-

Alvarez-Melis, D., Kaur, H., Daumé III, H., Wallach, H., & Wortman Vaughan, J. (2021).

Abstract: We take inspiration from the study of human explanation to inform the design and evaluation of interpretability methods in machine learning. First, we survey the literature on human explanation in philosophy, cognitive science, and the social sciences, and propose a list of design principles for machine-generated explanations that are meaningful to humans. Using the concept of weight of evidence from information theory, we develop a method for generating explanations that adhere to these principles. We show that this method can be adapted to handle high-dimensional, multi-class settings, yielding a flexible framework for generating explanations. We demonstrate that these explanations can be estimated accurately from finite samples and are robust to small perturbations of the inputs. We also evaluate our method through a qualitative user study with machine learning practitioners, where we observe that the resulting explanations are usable despite some participants struggling with background concepts like prior class probabilities. Finally, we conclude by surfacing design implications for interpretability tools in general.
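For readers unfamiliar with the weight of evidence, the sketch below uses its standard two-hypothesis form: the log ratio of how likely the evidence is under a hypothesis versus under its alternative. It is not the paper's method, which extends the idea to high-dimensional, multi-class settings. The function names and the example probabilities are assumptions made for illustration.

```python
import math


def weight_of_evidence(p_e_given_h, p_e_given_not_h):
    """Log-likelihood ratio of evidence e for hypothesis h versus not-h."""
    return math.log(p_e_given_h / p_e_given_not_h)


def posterior_probability(prior, woe):
    """Update a prior probability of h using posterior log-odds = prior log-odds + woe."""
    prior_log_odds = math.log(prior / (1.0 - prior))
    posterior_log_odds = prior_log_odds + woe
    return 1.0 / (1.0 + math.exp(-posterior_log_odds))


if __name__ == "__main__":
    # Hypothetical numbers: the evidence is four times as likely under h as under not-h.
    woe = weight_of_evidence(0.8, 0.2)
    print(woe)
    print(posterior_probability(0.5, woe))
```

A positive value means the evidence favors the hypothesis and a negative value means it counts against it. The additive update also shows why the prior class probabilities mentioned in the abstract matter when reading such explanations.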

-

Blodgett, S. L., Barocas, S., Daumé III, H., & Wallach, H. (2020).

Abstract: We surveyed 146 papers analyzing “bias” in NLP systems, finding that their motivations are often vague, inconsistent, and lacking in normative reasoning, despite the fact that analyzing “bias” is an inherently normative process. We further find that these papers’ proposed quantitative techniques for measuring or mitigating “bias” are poorly matched to their motivations and do not engage with the relevant literature outside of NLP. Based on these findings, we describe the beginnings of a path forward by proposing three recommendations that should guide work analyzing “bias” in NLP systems. These recommendations rest on a greater recognition of the relationships between language and social hierarchies, encouraging researchers and practitioners to articulate their conceptualizations of “bias”—i.e., what kinds of system behaviors are harmful, in what ways, to whom, and why, as well as the normative reasoning underlying these statements—and to center work around the lived experiences of members of communities affected by NLP systems, while interrogating and reimagining the power relations between technologists and such communities.