News

UMD Framework Used to Test Safety of Meta’s New Multimodal AI Model

UMD’s Furong Huang is co-leading efforts involving AI safety testing under simulated operational pressure.

How Generative AI Works—and Why It Can Mislead Users

GW’s David Broniatowski explains the pattern-based systems behind tools like ChatGPT, and the risks that come with them.

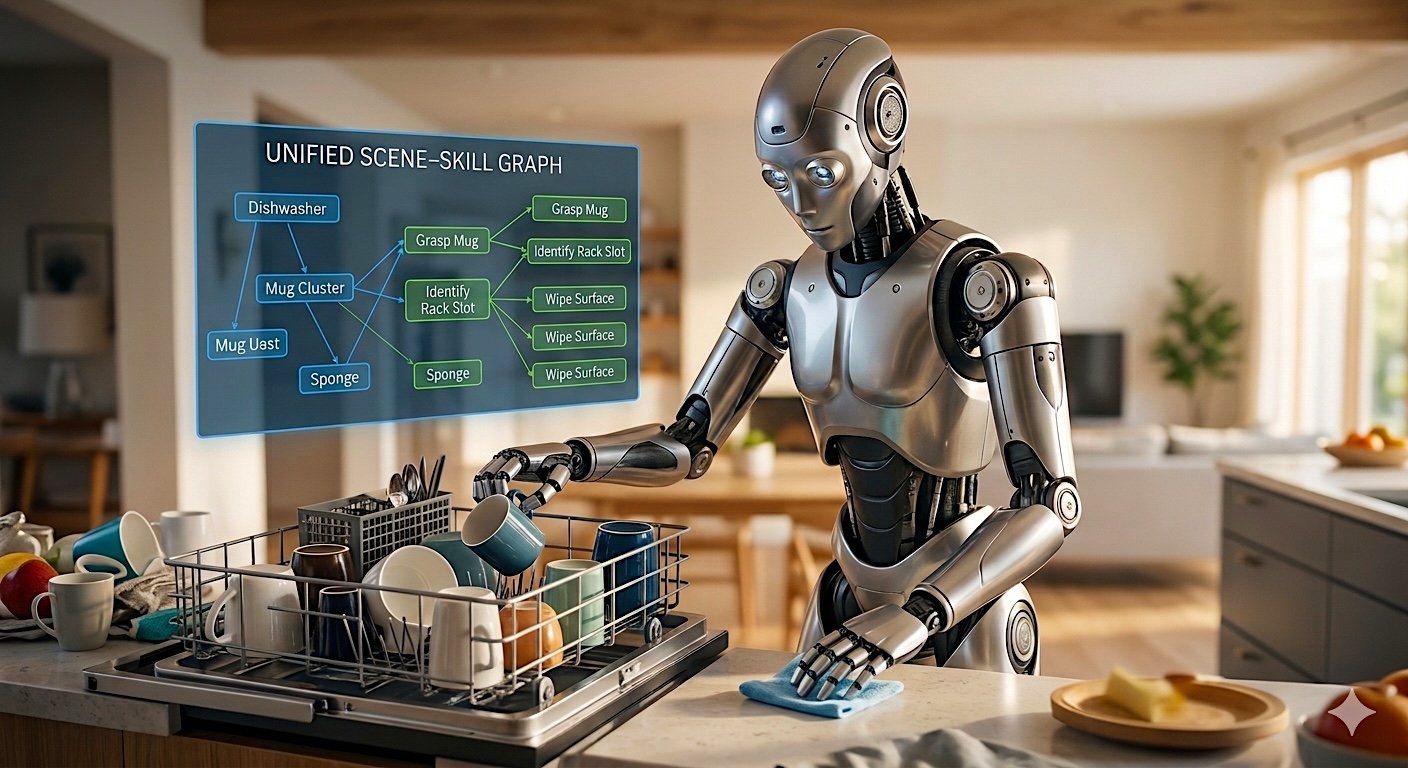

TRAILS Researchers Advance Robotics to Perform Complex Household Tasks

UMD’s Furong Huang and Tom Goldstein are advancing breakthroughs in trustworthy machine learning to create robotic systems that will excel in dynamic home environments.

A Building Code for Digital Infrastructures

GW’s David Broniatowski explains why one infrastructural concern about AI keeps resurfacing: a handful of companies shape how billions of people see information, speak, and organize.

AI-Enabled Manufacturing Will Change How, When and Where Goods Are Made

GW’s Susan Ariel Aaronson explains how AI-enabled manufacturing is reshaping global production and its risks, driving a new era of overcapacity.

AI’s Growing Energy Demands Raise Concerns About Infrastructure and Control

GW’s David Broniatowski is examining how AI systems interact not only with each other, but also with critical infrastructure and governance systems.

TRAILS Announces 11 Broader Impact Awards to Cultivate Next Generation of Trustworthy AI Leaders

New funding supports seed projects that incentivize a wide range of stakeholders to engage with and influence the future of AI.

Developing a Better Understanding of Global Participation in AI

UMD’s Maria Isabel Magaña talks about socio-cultural influences in AI development and governance.

What is Participatory Design in AI?

UMD’s Katie Shilton is leading research on participatory design to build trustworthy AI systems.

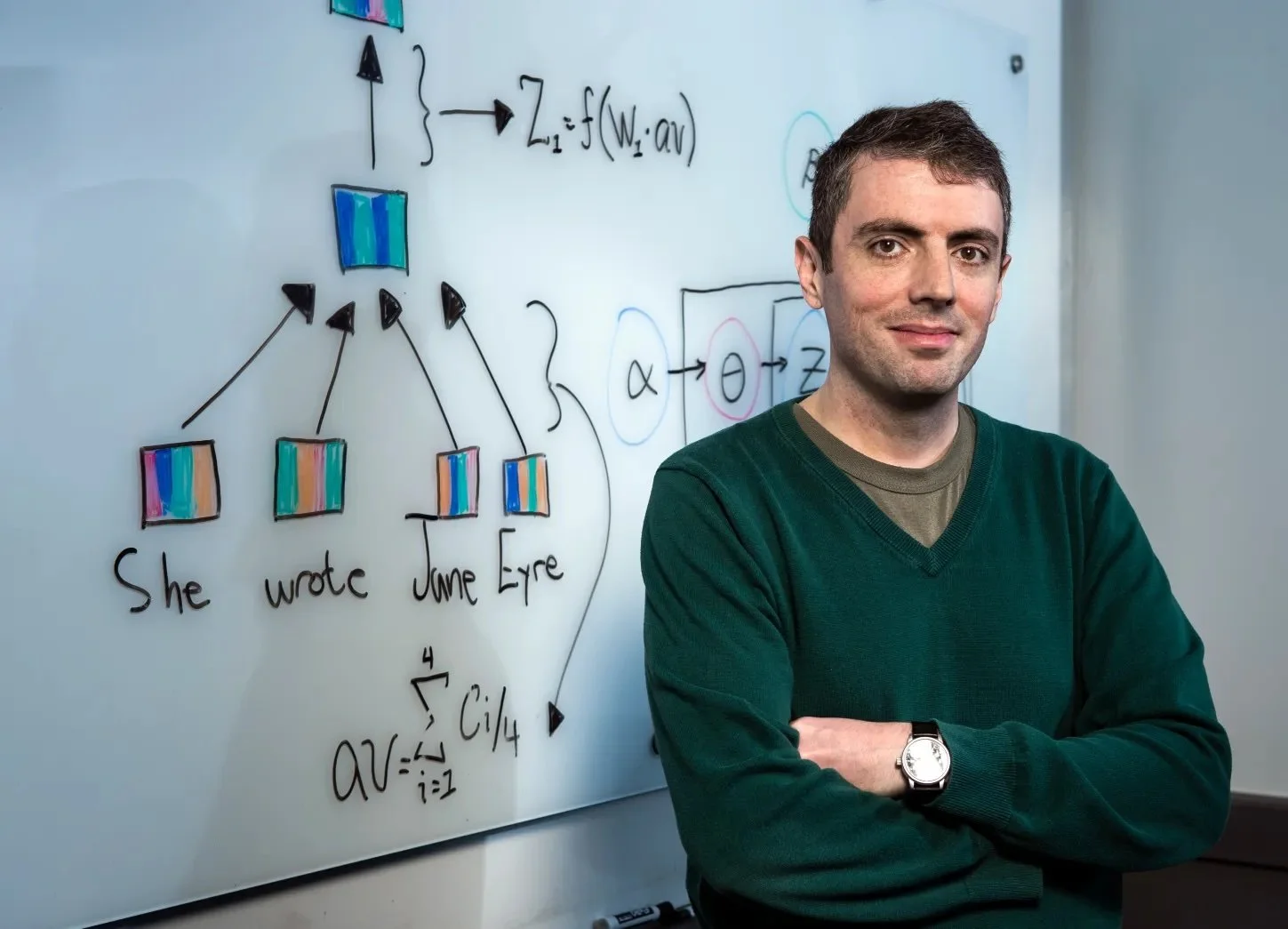

Improving AI-Human Interaction

UMD’s Jordan Boyd-Graber discusses how AI and humans can collaborate, highlighting ways AI could enhance—not replace—human abilities.

Helping Early-Career Researchers Navigate NSF Cybersecurity Funding

GW’s Adam Aviv and UMD’s Michelle Mazurek helped organize a workshop focused on developing competitive NSF proposals.

Study: Future AI Needs Stronger Safeguards

UMD’s Furong Huang co-led a study finding that today’s AI “guardrails” may fail to stop harmful behavior in more powerful future systems.

Huang Awarded Open Philanthropy Grant to Study Evasive AI Behavior

UMD’s Furong Huang will use the funding to create AI safeguards that distinguish between models that merely perform safe behavior and those that genuinely adhere to safety rules.

AI Goes to School for Math-Instruction Study

UMD’s Jing Liu is leading a three-year, $4.5M project that uses AI and real-world classroom data to identify effective teaching practices and improve math performance among struggling middle-school students.

Building Trust in AI, One Block at a Time

UMD’s Sheena Erete, Hawra Rabaan, and Tamara Clegg, along with Morgan State’s Afiya Fredericks, are building a community-driven AI literacy program that empowers middle school students and their parents in resource-constrained communities.

GW-NASA System Wide Safety (SWS) Collaboration Advances GW Aviation Safety Research

GW’s Peng Wei led a NASA project that developed and flight-tested AI-driven safety systems to manage risks for drones and emerging aircraft, culminating in a NASA workshop at GW.

Humans and AI Join Forces

UMD researchers revamp quizbowl competition to better gauge trust and collaboration between people and machines.

UMD Faculty Member Co-Hosts Workshop to Advance Automated Speech Recognition Systems Used for Education

UMD’s Jing Liu helped organize the event, which focused on improving the volume and quality of speech datasets used for children’s education.

AI Already Beat Jeopardy’s Ken Jennings, Are Any of Us Safe?

UMD’s Jordan Boyd-Graber participated in a Q&A with The Baltimore Sun about the AI singularity, stressing that while it is unlikely in the near term, the greater concern is how bad actors are already misusing AI.

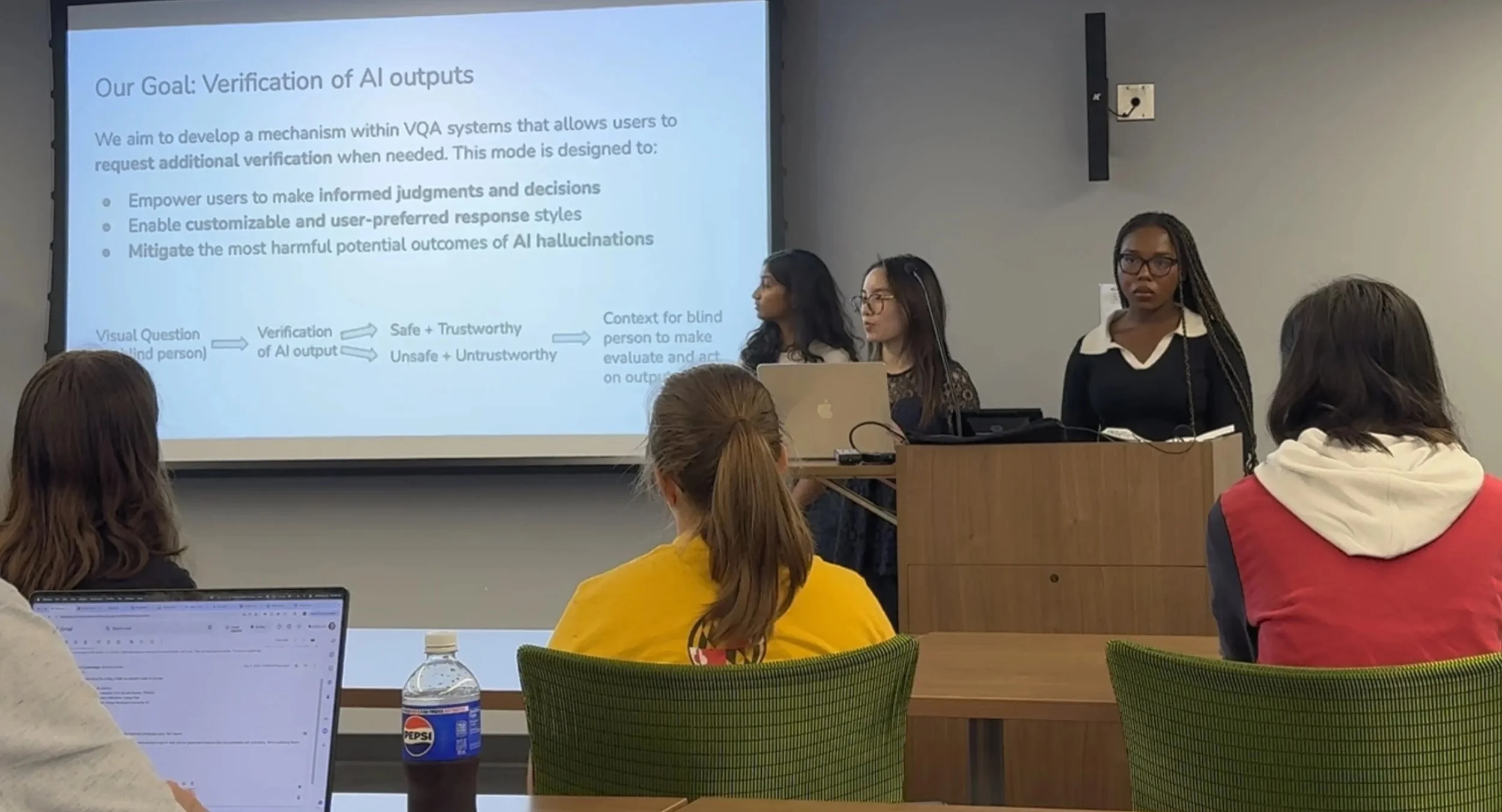

TRAILS Summer Fellows Develop Technology to Enhance Reliability in AI Responses for Blind Users

The five undergraduates spent 10 weeks at UMD working with the blind and low-vision community to develop a mobile app that adds verification layers to AI responses, making them more reliable and trustworthy.